Humanoid Robotics: First-Entry != Winner

Generalist Humanoids aren't generalist without biological-first infrastructure.

With Figure’s recent announcement publicizing their monster raise, theories surrounding the future of generalist humanoid robotics are heating up. With their newfound OpenAi partnership, the company will have access to the most innovative multimodal models, a seasoned team of veterans, and $625M in funding. Yet, Figure won’t win the long term race towards widespread general-purpose assistants . Neither will 1x, Tesla, or Boston Dynamics. Each of these anticipated industry leaders build for a variety of systematic, niche approaches (manufacturing, warehouse production, dangerous jobs, etc) to create as quickly as possible and be first entry into market. The issue - none of these likely incumbents actually train robot brain models the right way… using core biological principles. In a space which has proven to be incredibly time and resource consuming, building infrastructure for future, generalizable use cases is often overlooked. Just as Apple quickly trampled Microsoft with the release of the App Store in 2008, the future of humanoids involves robots having the ability to perform multiple, compoundable actions on a single platform.

With the futuristic market grasping everything involving human labor (through cheaper cost or energy spend), there will be multiple winners in the space. Having the ability to produce goods at 2X the speed and 1/2 the cost does indeed make integration into specialist cases a no-brainer. However, consumer facing humanoids will need to have the capability of doing your laundry, baking cakes, and purchasing items at the grocery store. In fact, the average human performs around 33 specific actions every day. We as a species also utilize ~300 joints daily. If there was a more efficient way to use human joints for our core actions, Darwinian principles state that we would have already evolved as a species to adapt. And even if not - the world as we know it today is built for the human form. It doesn’t take a rocket scientist to understand that applying machine learning is the right answer instead of programing all actions directly. For the generalist humanoid ‘App-Store’ of the future to be actualized, the robot's need to have the ability to actually perform such actions (crazy idea, I know).

The current giants will certainly capture billion dollar, niche markets first. Yet - they are not on track to actually produce the consumer-facing ~10 year vision. Figure shares a focus on designing humanoids for corporate tasks targeting labor shortages and jobs that are undesirable or unsafe. 1x obsesses over creating humanoids with fluid movements, but pull all training data from static, production facilities. Boston Dynamics has programmed them to jump, but hard codes the algorithm into the brain. To build a general purpose humanoid, one must instead go bottom up and utilize biological principles to train the brain model. The reason no-one has tackled this problem is because of how immensely difficult is is. Combining motor skills, balance, and machine learning, this is one of the most difficult research problems of the century.

Training LLM’s in the robot brain will be a visual task moving forward. There is no better way to learn an action rather than watch it live, analyze a video, or shadow an example. I acknowledge current deficiencies in the abilities of computer vision, but infer that the ability to process such giant clunks of data will be there by the time humanoids enter the market. Therefore, we are faced with two trillion dollar opportunities: a) creating the base model able to translate CV input into motor action output, b) creating a machine which has and performs the actions of the 300+ commonly used human joints.

Do all joints and muscles need to have their full capabilities upon production? Unless we have an additional 10 years, it’s unlikely. Take the current process of learning motor skills and cognitive functionality at youth. Infants are equipped with several reflexes necessary for survival at birth, yet learn the majority over the first two years of their lives. Within the first few weeks these reflexes are replaced with voluntary movements or motor skills as they began to explore functions of increased difficulty. Thus, we can draw direct parallels to prove that fluid motion needs to be learned and not hardcoded. As such, there is a need for adaptability in real time based off of what function is asked.

Current leaders have been impressive in creating the functionality to walk and adjust without a tether. The dynamic walking problem is largely solved: there are no points in the robots gait cycle where abrupt stops would cause the robot to fall due to it’s center of mass constantly adjusting to a safe positioning above solid ground. Although the solution utilizes the base principles of momentum, it was solved through constant trial and addition by parts. Aka it’s still hard coded. Babies primarily learn how to walk due to the realization that walking helps them move more efficiently. They quite literally perform a variety of A/B testing until they figure out what is the optimal way to carry out an action. For robots to exhibit this base level of cognitive learning, they must be built of similar composition and learn how to walk on their own through processing visual data, and replicating it through constant trial an error until perfection. Walking is just one example; providing machines the functionality to learn and execute independently creates self-learning machines and wins the space. When performing physical actions in synthesis to human mobility, the closer humanoid learning is to human learning, the deeper the ability to self-train and execute difficult actions becomes.

To argue against myself, these companies are on track to work. Yes, specialist machinery will be first into market and win significant (billions of dollars of) market share. But beware - current practices don’t transfer to general purpose - the future of generalist-purpose robotics is driven by machines built on biological principles. Markets have shown that the generalist does usually win. Niche skill performance is increased substantially if compound actions are learned from the ground up rather than simply trained onto a divergent order of operation.

Training these models from the ground up is a much more difficult and time-intensive problem. Existing companies are not pursuing it due to the trade-off of missing out on billions of dollars of specialized revenue. It’s one thing to capture single-use-case functionality versus bringing a new Computer-Vision model into the industry. Why wouldn’t Figure build and capture such markets instead of going the less probabilistic route? The company was only able to create the “I’m hungry” demonstration after $100M in funding. They are still at the bottom of the staircase when it comes to producing self-learning humanoids. Another clear reason to not become generalizable lies in production cost. The median existing humanoid is in the ~6-figure range to manufacture. Simple year by year mathematics states that profitability is only achieved through selling to larger enterprises and eliminating human labor. Instead, the consumer market begs for a cheaper, more malleable option, that acts as a supplement to user behavior. There is a clear gap for raising general productivity in hands on work; not just replacing jobs in industry as the current robots suggest.

To overcome these bottlenecks, specialist humanoid companies would need new training infrastructure to what exists today, which limits initial opportunity of market entry, or begin creating generalizable models in a branch parallel to their current work, thus catching themselves in the innovators dilemma. I truly believe that both solutions will not be accomplished by these players and it’s a new startups opportunity to seize.

The startup which systematically solves the Computer-Vision problem will become the Nvidia of Robotics. There is a higher probability in achieving this research-based solution rather than the tangible biological buddy due to limited startup funding. Initial training would replicate the learning of an infant today. Upon rough first design, the robot should be able to break down action through a human worn VR headset simulating it’s vision parameters. Naturally, the progression following involves training directly from a larger database of videos until immediate camera observation produces similar output.

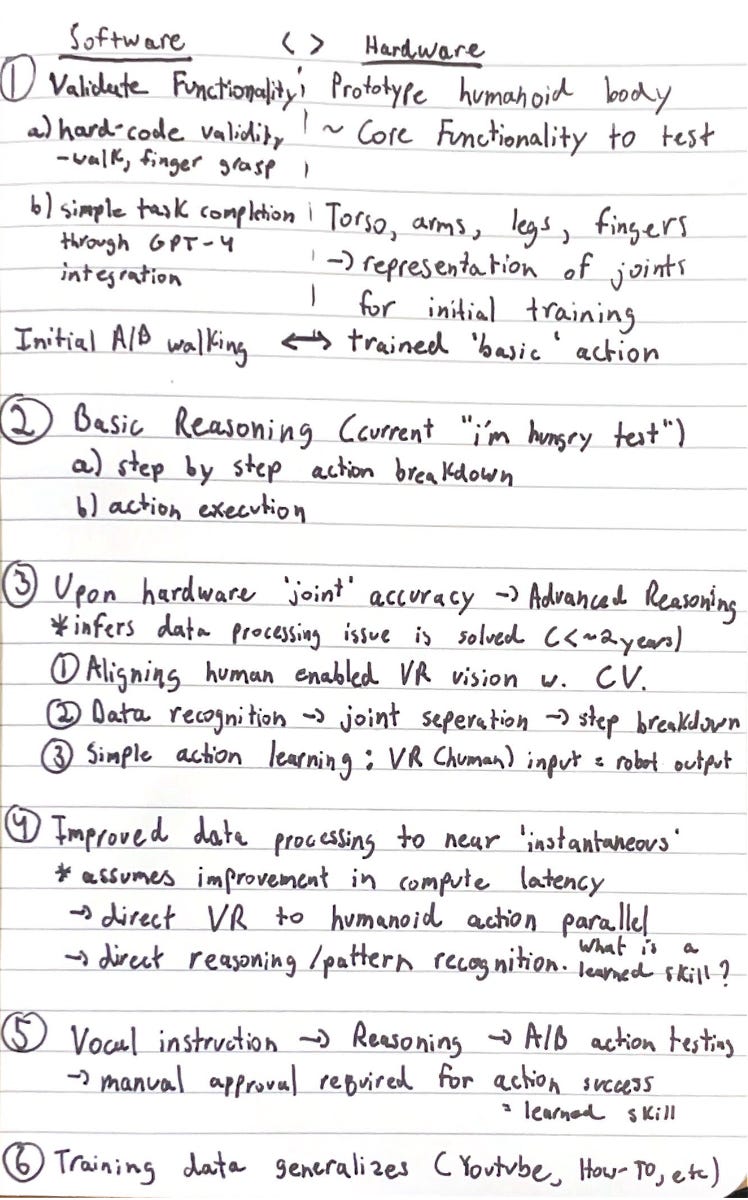

I would propose the following high level plan for building this model.

It’s a similar thesis comparing Tesla to every other self-driving car company (see Tesla real-time insurance). Building the best infrastructure preserves the companies moat for years to come. Keep an eye on your friendly neighborhood humanoids, they may indeed solve the base research dilemmas related to CV and motor functionality.

![25 Revolutionary Robotics Industry Statistics [2023]: Market Size, Growth, And Biggest Companies - Zippia 25 Revolutionary Robotics Industry Statistics [2023]: Market Size, Growth, And Biggest Companies - Zippia](https://substackcdn.com/image/fetch/w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F2e08ed98-18be-4580-88db-f4fa7f58a1b2_800x671.jpeg)